OpenAI Codex Gets Computer Use, Browser and Image Gen

OpenAI Codex adds computer use, an in-app browser, image generation via gpt-image-1.5, and 90+ plugins in an update rolling out from April 16, 2026, as 3 million developers use it weekly.

What to Know

- 3 million developers use OpenAI Codex every week, nearly one year after the app first launched

- New computer use lets Codex see your screen, control the cursor, and type inside any Mac app — multiple agents run simultaneously

- Image generation powered by gpt-image-1.5 is now built in, with no API key required and usage covered by a ChatGPT account

- 90+ new plugins were added, including Atlassian Rovo, GitLab Issues, and the Microsoft Suite; personalization and computer use are not yet live in the EU or UK

OpenAI Codex is no longer just a coding assistant. On April 16, 2026, the company announced a sweeping update to the Codex desktop app that adds background computer use, an in-app browser, native image generation, and more than 90 new plugin integrations — a combination that looks a lot less like a feature drop and a lot more like the opening move in a full-on AI operating system war.

What OpenAI Added to Codex

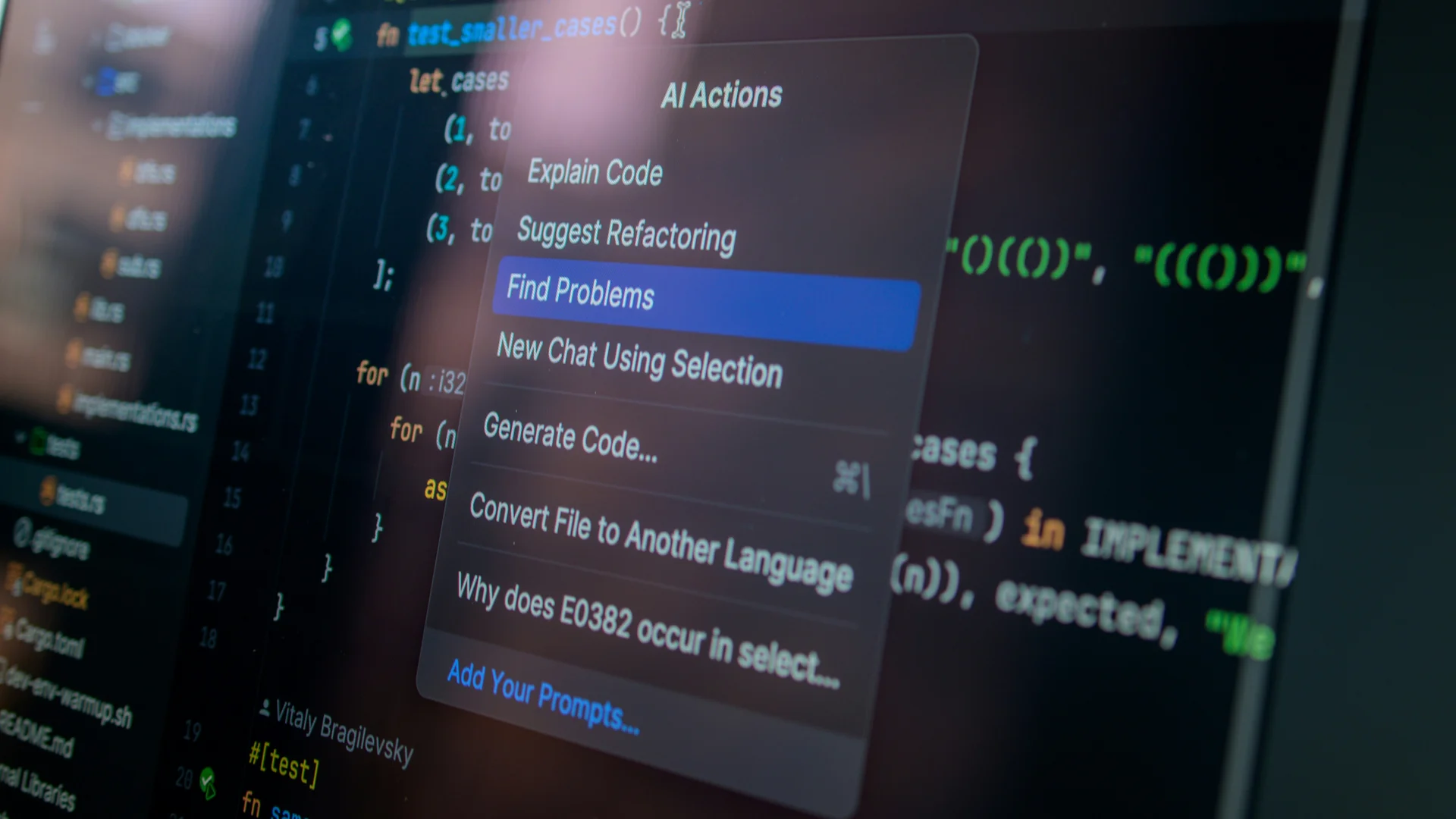

Nearly one year after OpenAI Codex first shipped, the company is done playing it safe. The headline addition is background computer use — Codex can now see your screen, move its own cursor, and click and type inside any Mac application. Multiple agents can run at the same time without interrupting whatever you have open. OpenAI says the feature is most useful for frontend iteration, app testing, and workflows that don't surface a clean API.

The in-app browser adds another layer. Users can drop comments directly on web pages rather than trying to describe a UI change in plain text. OpenAI says the current focus is frontend and game development, with full browser control planned for later. That is sensible sequencing: prove it works in constrained domains before opening it up.

Workflow improvements round out the release: multiple terminal tabs, GitHub PR review comment handling, SSH connections to remote devboxes (currently in alpha), and a summary pane that tracks agent plans, sources, and artifacts in one place. Files open in the sidebar with rich previews covering PDFs, spreadsheets, slides, and docs. OpenAI says the intent is reaching 'a level of quality previously only possible through extensive custom instructions' — which is a polite way of saying the app should stop needing babysitting.

90+ Plugins and Image Generation Built In

The computer use update ships alongside more than 90 new plugins — integrations covering Atlassian Rovo, CircleCI, CodeRabbit, GitLab Issues, the Microsoft Suite, and Neon by Databricks. Each plugin can mix skills, app connections, and MCP servers, letting Codex reach across a developer's existing toolset without extra configuration steps.

Image generation is now baked in too, powered by gpt-image-1.5. No separate API key. No tab switching. Usage is covered by a standard ChatGPT account. For developers who regularly need mockups, UI assets, or quick visual references mid-build, that frictionless access is genuinely useful — historically, this kind of thing meant a separate tool, a separate bill, and a workflow break.

The proactive mode is worth calling out separately. Using context from connected plugins, memory, and active projects, Codex can now suggest where to start a workday or pick up a paused task. It pulls in Google Docs comments, relevant Slack threads, Notion pages, and codebase context, then surfaces a prioritized action list. That's the feature that crosses from 'better autocomplete' into 'agent that runs your morning.'

Is Codex Swallowing OpenClaw?

The feature set lands on top of some loaded context. OpenClaw, the open-source personal agent framework built by Austrian developer Peter Steinberger, went viral in early 2026, pulling 60,000 GitHub stars in 72 hours and drawing wide comparisons to a personal AI operating system. A lot of what Codex now does, OpenClaw was already doing.

Steinberger joined OpenAI in February 2026 to lead personal agent development. Sam Altman, Mark Zuckerberg, and Satya Nadella all reached out after OpenClaw's rise. The project moved to an open-source foundation with OpenAI as financial sponsor. Before the hire, Anthropic had sent Steinberger a trademark complaint over the original name 'Clawdbot' — which kicked off two chaotic rebrands and, observers said, accelerated his move to OpenAI. OpenClaw had been running primarily on Anthropic's Claude models at the time.

The irony is thick. Anthropic's own answer to Codex is Claude Code — a terminal-based agentic coding assistant that reads entire codebases, edits files, runs tests, and commits to GitHub. Anthropic added its own computer use feature for Claude in March, available as a research preview for Pro and Max subscribers on macOS. Both tools are converging fast on the same destination: an agent that knows your stack, knows your context, and acts without being micro-managed.

What Does the Codex Update Mean for Developers?

The rollout started April 16 for desktop users signed in with ChatGPT. Personalization features and computer use are not yet available in the EU or UK — a regional carve-out that will draw scrutiny given how large the European developer market is, and how vague the timeline for access remains.

Three million weekly active developers is the number OpenAI leads with, and it matters. That's a moat, assuming retention holds. But packing computer control, browser access, image generation, plugin orchestration, and proactive scheduling into a single app creates real complexity. The risk isn't that Codex lacks features. The risk is that it starts to feel like too much: too many surfaces, too many permissions, too much to configure before you get anything useful done.

OpenAI frames the goal as narrowing 'the gap between what people can imagine and what they can build.' That's the right ambition. Whether a feature-dense desktop app is the right vehicle for it is the open question most developers will answer by the end of the month.

Frequently Asked Questions

What is OpenAI Codex computer use?

OpenAI Codex computer use is a background feature that lets the Codex agent see your screen, control the cursor, and click or type inside any Mac application. Multiple agents can run simultaneously without interrupting the user's active workflow, according to OpenAI's April 16, 2026 announcement.

What new features did OpenAI add to Codex in April 2026?

OpenAI added background computer use, an in-app browser, native image generation via gpt-image-1.5, over 90 new plugins including Atlassian Rovo and GitLab Issues, a proactive mode, multiple terminal tabs, SSH connections to remote devboxes in alpha, and a summary pane for tracking agent activity.

How does image generation work in OpenAI Codex?

Image generation in Codex is powered by gpt-image-1.5 and is built directly into the coding workflow. No separate API key is required. Usage is covered by a standard ChatGPT account, so there is no need to switch tools or pay a separate bill for basic image generation tasks.

Is OpenAI Codex available in the EU and UK?

Partially. The core Codex update began rolling out on April 16, 2026 for desktop users signed in with ChatGPT. However, personalization features and computer use are not yet available in the EU or UK, and OpenAI has not given a specific timeline for when those capabilities will reach either region.